Published on November 26, 2025

HaptXDeep turns robots into apprentices

At the Institute for Material Handling and Logistics (IFL) at KIT, researchers are currently developing an artificial intelligence (AI)-based system that could fundamentally change the way robots learn. The HaptXDeep project, funded by the Innovation Campus Mobility of the Future (ICM), combines sensitive hardware with adaptive software and intuitive human-machine interaction. "Our vision is that a robot can be trained just as quickly as a new human employee. For example, when a new person is trained in a company, supervisors or authorized personnel may need a few hours to show the new task to an apprentice. In principle, this should also be possible for robot implementation," explains Edgar Welte, doctoral researcher at the IFL. With HaptXDeep, training that currently takes weeks or even months should be possible in just a few hours, without any robotics expertise.

Manipulation by hand signals

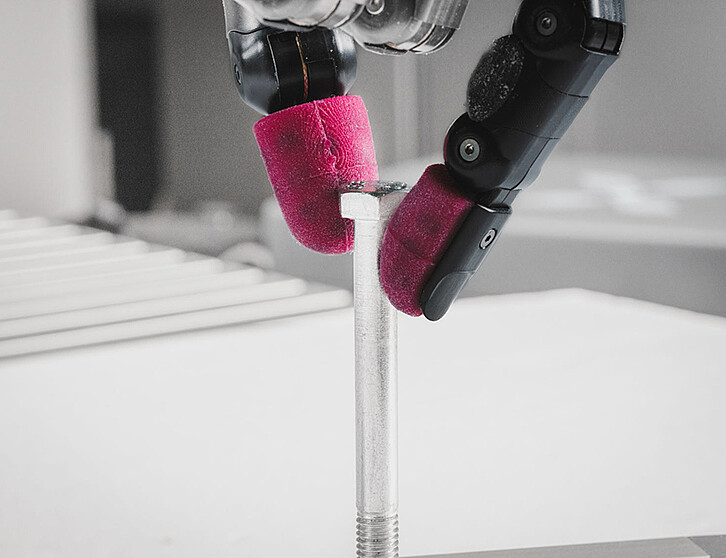

As part of the HaptXDeep project, a unique robot system was procured to test the AI. The first core element of the system is a five-fingered robotic hand from Shadow Robot with twenty degrees of freedom, made possible by twenty individually controllable joints. This enables the artificial hand to perform complex gripping movements that closely resemble those of the human hand. The robotic hand is mounted on a so-called cobot – a collaborative robotic arm that can work alongside humans without a protective fence. The hand is also equipped with highly sensitive tactile sensors that capture precise force and touch information. Users control the system via a haptic glove from HaptX, the second core element, which not only transmits movements to the robot but also gives the wearer tactile feedback. "When I use the glove or the robot hand as an ‘extended arm’ to grab a water bottle, for example, I feel the resistance that the robot fingers feel when they come into contact with the bottle. To achieve this, there are twelve small air chambers in the fingertips of the glove, which inflate when the robot hand touches an object. And we use this information to train our AI system so that the robot can evaluate the tactile feedback generated by the objects and adapt its grip accordingly," explains Welte.

Learning by demonstration

The learning process runs in two technically challenging phases. First, a domain expert – someone who is very familiar with the task – demonstrates the task several times while wearing the teleoperation glove. During this demonstration, the robot hand moves in parallel and collects data on movements, forces, and positions. Welte clarifies: "Instead of just observing humans and learning from them, we make sure that the robot performs the task itself. The human demonstrates, the robot learns – and both work together. The concept of imitation has the advantage that the robot senses and ‘feels’ how to perform the task itself via sensors." Professor Rania Rayyes, who investigates the synergies of artificial intelligence and robotics for interactive robot manipulation at the IFL, adds: "In our planned user study, we want to test whether this type of teleoperation is actually very intuitive. We expect that test persons without in-depth expert knowledge will have no great difficulty in remotely controlling the robot. Here, we are also looking at how well the tasks have to be demonstrated in order to still achieve good results."

In the second phase, the robot attempts to perform the task independently. Mistakes are part of the concept. The robot is allowed to fail – and is then corrected by humans. "The trainer stands by and closely observes the individual steps. If an inaccuracy or error occurs, the trainer corrects it briefly and lets the robot continue. The robot can use these corrections to continue learning," says the developer, describing the intuitive process.
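The two phases can be illustrated with a deliberately simple toy model. The sketch below is not the HaptXDeep implementation: it assumes a one-dimensional state, a hypothetical `expert_action` function standing in for the human trainer, and a nearest-neighbour lookup in place of the project's learning algorithms. It only shows the general pattern of learning from a few demonstrations and then adding data wherever the trainer has to step in.

```python
import random

def expert_action(x: float) -> float:
    """Hypothetical stand-in for the human trainer: the correct action for state x."""
    return 2.0 * x + 1.0  # arbitrary linear target, chosen only for illustration

def policy(dataset, x):
    """Toy policy: copy the action recorded for the closest known state."""
    _, nearest_action = min(dataset, key=lambda pair: abs(pair[0] - x))
    return nearest_action

def mean_error(dataset, test_states):
    """Average deviation between the toy policy and the trainer's action."""
    errors = [abs(policy(dataset, x) - expert_action(x)) for x in test_states]
    return sum(errors) / len(errors)

def train(num_demos=3, num_rollouts=50, tolerance=0.05, seed=0):
    rng = random.Random(seed)
    test_states = [i / 100 for i in range(101)]

    # Phase 1: the trainer demonstrates the task a few times.
    demo_states = [rng.random() for _ in range(num_demos)]
    dataset = [(x, expert_action(x)) for x in demo_states]
    error_before = mean_error(dataset, test_states)

    # Phase 2: the robot acts on its own; the trainer corrects only clear errors.
    corrections = 0
    for _ in range(num_rollouts):
        x = rng.random()
        if abs(policy(dataset, x) - expert_action(x)) > tolerance:
            dataset.append((x, expert_action(x)))  # the correction becomes training data
            corrections += 1

    return error_before, mean_error(dataset, test_states), corrections

if __name__ == "__main__":
    before, after, corrections = train()
    print(f"mean error after demonstrations: {before:.3f}")
    print(f"mean error after {corrections} corrections: {after:.3f}")
```

In this toy setting, the trainer intervenes only when the robot's action deviates by more than the assumed `tolerance`, so states the robot already handles well add no further data.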

When a few examples are enough

In total, this process takes only a few hours. The simple parts of the task do not have to be redone a thousand times. The interactive corrections are even more valuable because they focus precisely on the areas where the task is difficult. "The bottlenecks in the task automatically emerge. The correction runs give us more data in the difficult areas and less data in the remaining areas. This results in a useful amount of data for the learning process," says Welte. There is always a certain degree of variance in the movement that the system has to deal with. The keyword here is generalization: "Generalization describes the ability of a model to not only memorize the training data, but also to react correctly to new, unknown data. On the one hand, the demonstrated sequences are generalized, and on the other hand, the robot's performance is generalized. The self-learning algorithms we are developing enable the system to generalize with just a few demonstrations – even if the shape, color, or material of the object changes."
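A small worked example makes the data argument concrete. The segment names and sample counts below are purely hypothetical, as is the assumption that each task segment is "mastered" after a fixed number of samples. The sketch compares targeted corrections with repeating the whole task until the hardest segment is covered.

```python
def collect_samples(samples_needed, num_demos, num_correction_runs):
    """Count the samples per task segment after demonstrations and correction runs."""
    # Every full demonstration contributes one sample to every segment.
    counts = {segment: num_demos for segment in samples_needed}
    for _ in range(num_correction_runs):
        for segment, needed in samples_needed.items():
            # The trainer only corrects segments the robot has not yet mastered.
            if counts[segment] < needed:
                counts[segment] += 1
    return counts

# Hypothetical task: samples each segment needs before the robot handles it reliably.
samples_needed = {"reach": 3, "grasp": 8, "lift": 3, "insert": 12}

counts = collect_samples(samples_needed, num_demos=3, num_correction_runs=10)
targeted_total = sum(counts.values())
# Baseline: repeat the full task until the hardest segment has enough samples.
uniform_total = max(samples_needed.values()) * len(samples_needed)

print(counts)          # {'reach': 3, 'grasp': 8, 'lift': 3, 'insert': 12}
print(targeted_total)  # 26
print(uniform_total)   # 48
```

Under these assumptions, the corrections add samples only for the two difficult segments, so the targeted approach needs 26 samples where full repetitions would need 48.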

Why robots need to rethink

The challenge lies in the diversity of modern production environments. While classic industrial robots repeat the same tasks for decades, the circular economy is creating completely new requirements. Prof. Rayyes explains: "Robots are often still programmed in the traditional way for a specific, defined task. Especially in the advancing circular economy, where components are of different ages, damaged, or modified, completely new requirements are emerging as a result of remanufacturing and recycling. Here, robots have to deal with different product generations and conditions. For this reason, KIT is running a project called ‘SFB1574 – Circular Factory for the Eternal Product’, in which we are involved and are researching how robot systems can quickly adapt to new tasks and environments in remanufacturing." The possible applications are diverse: assembly, disassembly, object manipulation – wherever flexibility and fine motor skills are required. "In view of the current challenges of the industrial circular economy and demographic change, we want to implement intuitive robot programming through human demonstrations and corrections, while at the same time enabling adaptive robots through advanced AI systems that can adapt to dynamic manipulation tasks with the help of modern technologies such as HaptXDeep," concludes Prof. Rayyes.
